Why IBM SVC and EMC VPLEX are not the same.

Posted on August 5, 2012 by TheStorageChap in VPLEX

Whilst EMC VPLEX and IBM SAN Volume Controller (SVC) may, at a cursory glance, appear to be similar technologies with similar use cases, it is not so black and white. The reality is that these solutions were conceived with very different aims in mind and with very different architectures.

IBM SVC

IBM SVC was designed as a single-site storage virtualisation solution that allowed less capable storage arrays to be pooled behind the virtualisation architecture and given additional write caching and non-disruptive data mobility features. More recent SVC development has added features such as auto-tiering and thin provisioning, transforming SVC into both a storage-controller-type solution with embedded features (e.g. V7000) and a standalone solution that can enable storage commoditisation use cases across heterogeneous storage arrays.

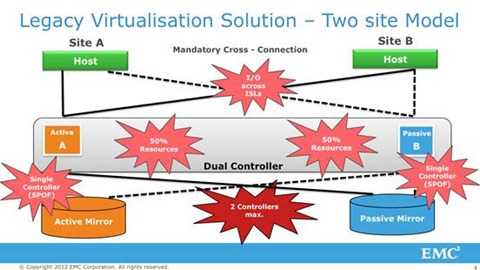

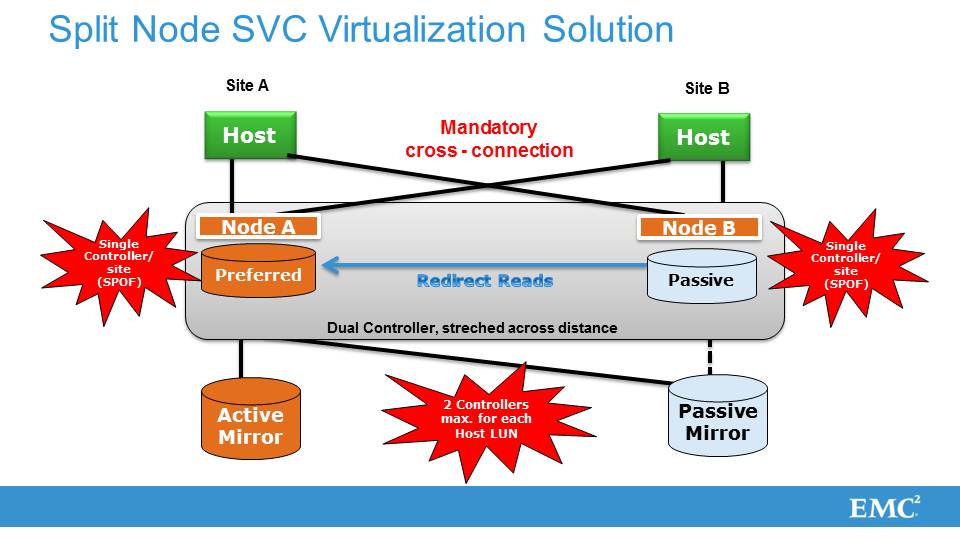

The architecture is based on I/O Groups of two SVC Nodes in an active/passive state, with an SVC Cluster consisting of up to four I/O Groups and eight SVC Nodes. An SVC Cluster can be configured in a ‘split node’ architecture, where the two-Node I/O Group is split across physically separate sites using Fibre Channel and ISLs.

EDIT 25.10.2012: In fairness to a comment posted by IBM I have added the picture below specifically depicting an IBM Split Node SVC solution.

EMC VPLEX

EMC’s heritage and its position in the marketplace have been based on the development of storage platforms and the software features inherent in those platforms, such as thin provisioning, snaps, clones and FAST. EMC VPLEX was conceived as a storage virtualisation and multi-site federation solution that could be used to further augment those existing technologies and enable greater levels of availability and mobility for both data and applications.

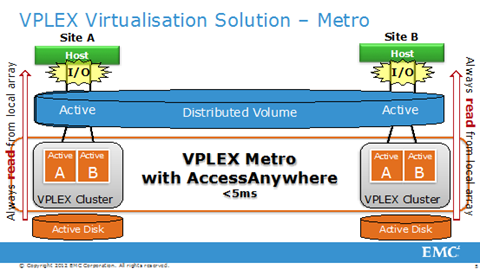

An EMC VPLEX Cluster consists of up to eight VPLEX Directors participating in a single I/O group. Each Director has four front-end and four back-end Fibre Channel ports, with completely separate connectivity for inter-Director, inter-Engine and inter-Site communication. A completely separate, resilient VPLEX Cluster can be created within a second datacentre and connected to the VPLEX Cluster within the first datacentre over either Fibre Channel or Ethernet, with no requirement for merged fabrics. Once connected, VPLEX enables the creation of local and distributed virtual volumes. In the case of the latter, these volumes are concurrently read/write accessible across both datacentres in a true active/active architecture in which resources from both datacentres are utilised for production I/O.

Whilst VPLEX Local does not necessarily have all of the storage commoditisation features associated with IBM SVC, it does enable the key virtualisation use cases of storage abstraction, storage pooling, data mobility and storage mirroring. For storage commoditisation use cases, EMC proposes the use of Federated Tiered Storage to enable the use of Tier 1 EMC storage software features across third-party arrays, which can then, if required, additionally benefit from the use of VPLEX.

| EMC VPLEX Local | IBM SVC |
| --- | --- |
| All storage array features and functionality remain available behind VPLEX. | SVC eliminates the ability to use back-end array features and functionality, due to write caching. |
| VPLEX is an active/active architecture where a virtual volume can be active across multiple VPLEX Directors. | SVC is an active/passive two-Node I/O Group where a volume can only be active from one SVC Node. |
| VPLEX maintains backend active/active array functionality. | SVC forces an active/active backend array into an active/passive solution, due to active/passive SVC Nodes. |
| VPLEX Directors can be added non-disruptively to an existing VPLEX Cluster and the new resources (e.g. read cache, bandwidth, IOPS) used across the Cluster. | I/O Groups are discrete silos, and free resources in one I/O Group cannot be leveraged in another. With SVC 6.4 IBM claims non-disruptive mobility between I/O Groups. This is not 100% accurate, as the feature is limited to Windows and Linux, does not support clustered servers and does not support VMware. Even in the case of Windows, a reboot is required. |
| VPLEX Directors have four front-end and four back-end ports that are dedicated to front-end and back-end I/O. | An SVC Node has only four HBAs, and two of them are required for cache mirroring, leaving only one HBA per fabric for production. Losing one of the HBAs is equivalent to losing one complete fabric. |
| VPLEX can use RecoverPoint CDP to protect virtualised volumes against corruption by replicating to non-virtualised disks, which can be used for recovery even in the event of a total loss of the virtualisation technology. Alternatively, snap and clone functionality at the array level can be fully utilised, as VPLEX does not do write caching. | Snaps and clones can be completed using SVC and are used to recover in case of an outage, but how do you recover if the virtualisation layer is gone? All your snaps and clones are also gone, resulting in a need to restore the data from tape. |
| Non-disruptive data mobility across heterogeneous storage arrays can be paused, stopped and backed out, without any risk of data loss and with little performance impact. | Data mobility can have performance impacts during the actual mobility phase, and the method of migration can lead to data loss during the migration in the event of a storage or site failure. |
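
The port-count row in the table above comes down to simple arithmetic, sketched here in Python. This is only an illustration, not vendor tooling: the function name `production_ports_per_fabric` is hypothetical, and the assumption of two redundant fabrics is inferred from the "one HBA per fabric" wording rather than stated outright. The port counts themselves are taken from the table.

```python
def production_ports_per_fabric(total_ports: int, reserved_ports: int, fabrics: int = 2) -> int:
    """Ports left for production I/O on each fabric.

    total_ports    -- ports available on the node or director for this role
    reserved_ports -- ports taken out of production use (e.g. cache mirroring)
    fabrics        -- number of redundant fabrics (assumed to be 2 here)
    """
    usable = total_ports - reserved_ports
    if usable < 0 or fabrics < 1:
        raise ValueError("invalid port or fabric count")
    return usable // fabrics


# SVC Node, per the table: four HBAs, two required for cache mirroring.
svc_per_fabric = production_ports_per_fabric(total_ports=4, reserved_ports=2)

# VPLEX Director front end, per the table: four dedicated front-end ports,
# none of which are shared with inter-Director traffic.
vplex_front_end_per_fabric = production_ports_per_fabric(total_ports=4, reserved_ports=0)

print(svc_per_fabric, vplex_front_end_per_fabric)
```

With a single production port per fabric, the failure of that one port removes the node's only path on that fabric, which is the point the table makes about SVC.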

| VPLEX Metro | Split-Node SVC Cluster |
| --- | --- |
| Independent, resilient VPLEX Clusters are federated across datacentres, using Fibre Channel or IP with no requirement for merged fabrics. | Two-Node I/O Groups are physically ‘split’ between datacentres, leading to reduced resiliency within each datacentre. Fabrics must be merged between datacentres, and multiple ISLs must be used to dual-path all hosts across both SVC Nodes of the I/O Group, adding fabric complexity and cost. |
| When using VPLEX with the minimum requirement of two Directors within a cluster, the loss of one Director does not require I/O to be redirected to the remote site, as there is local redundancy. | When used in a split-node configuration, SVC forces a multi-controller array with multiple redundancies into a two-Node solution with no redundancy per site. Losing one Node is similar to losing a site: I/Os will have to cross ISLs to the remote site. |
| A write to a distributed virtual volume from either site is written once to the remote site, irrespective of which location the production application is running in. | For each write I/O, each appliance has to do one write to the local backend, one write to the remote SVC Node and one write to the remote array; this is at least one round trip more than VPLEX does. If you run production on the non-preferred site, you have to write to the Primary Node, mirror cache, write to the local array and write to the remote array, making it two round trips more than VPLEX. |
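
To put the round-trip comparison in the last row into numbers, here is a minimal Python sketch. It rests on assumptions worth stating: VPLEX Metro is taken to need one inter-site round trip per write (inferred from "written once to the remote site"), split-node SVC is taken at the table's lower bound of one extra round trip on the preferred site and two extra on the non-preferred site, and the 5 ms inter-site round-trip time is a purely hypothetical figure. The class and constant names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WritePath:
    """A write path characterised by its inter-site round trips per write."""
    name: str
    inter_site_round_trips: int

    def added_latency_ms(self, rtt_ms: float) -> float:
        # Latency added by distance alone; array and cache service times are ignored.
        return self.inter_site_round_trips * rtt_ms


# Round-trip counts derived from the table (the SVC figures are lower bounds).
VPLEX_METRO = WritePath("VPLEX Metro", 1)
SVC_PREFERRED = WritePath("Split-node SVC, preferred site", 2)
SVC_NON_PREFERRED = WritePath("Split-node SVC, non-preferred site", 3)


def compare(rtt_ms: float) -> dict[str, float]:
    """Distance-induced write latency in ms for each path at a given RTT."""
    paths = (VPLEX_METRO, SVC_PREFERRED, SVC_NON_PREFERRED)
    return {p.name: p.added_latency_ms(rtt_ms) for p in paths}


if __name__ == "__main__":
    # Hypothetical 5 ms inter-site round-trip time.
    for name, latency in compare(5.0).items():
        print(f"{name}: {latency:.1f} ms")
```

The model deliberately ignores everything except inter-site distance, so it only shows how the gap between the two designs scales with round-trip time, not absolute write latency.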
